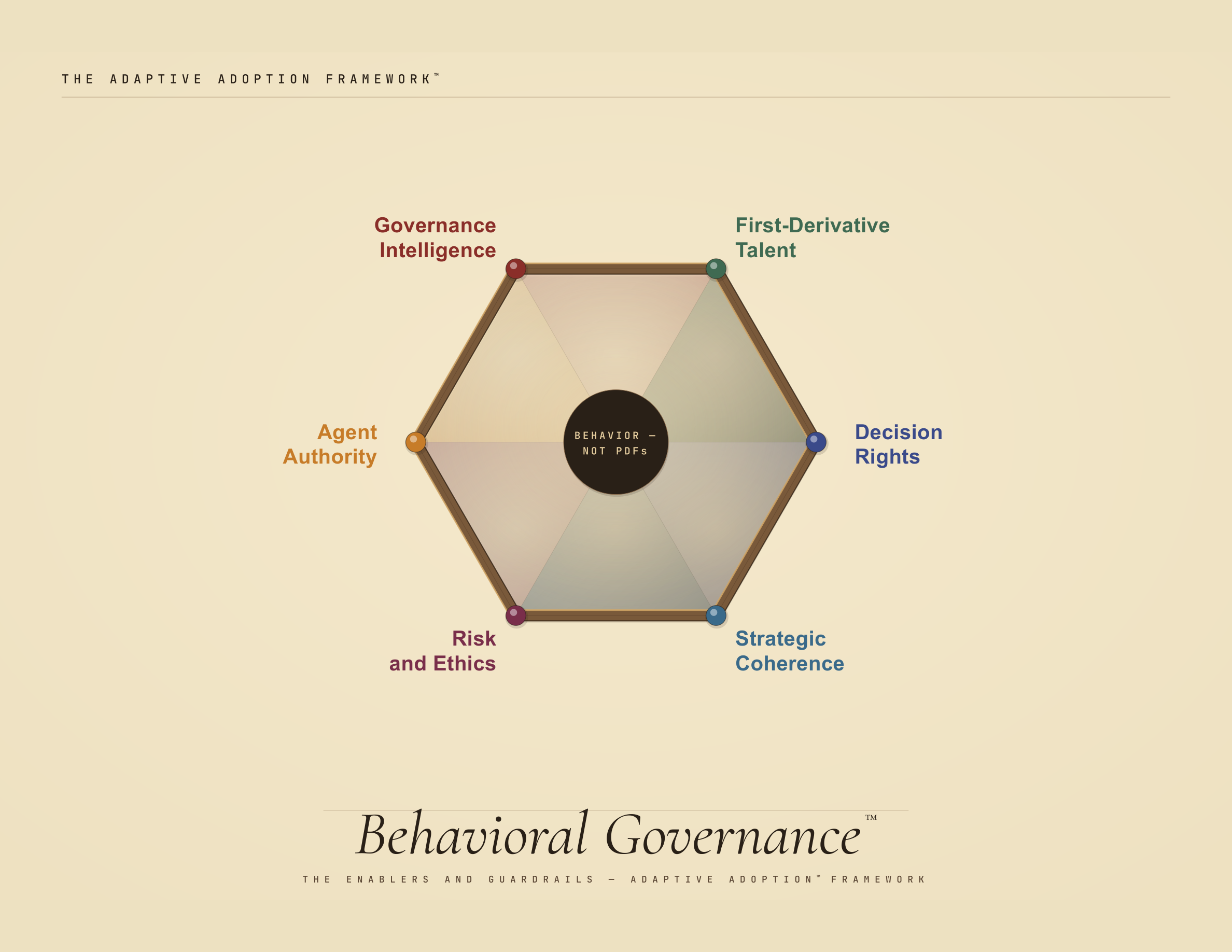

ADAPTIVE ADOPTION™ — BEHAVIORAL GOVERNANCE™

Behavioral Governance

The fatal mistake in all governance frameworks is that they are anti-change frameworks, not change frameworks. Policies and policing. Brakes, not accelerators.

Design governance to “go hard and go safely.” Design for behaviors, not PDFs.

Governance is the enabling architecture of adoption. Not compliance. Not policing. Not the committee that says no. The CAIO is the organizational change leader for AI, responsible for adoption that creates value, governance that enables speed, talent that keeps pace, and strategic coherence across an AI estate that no one else has sight of. The six dimensions below are what the role requires: the decision rights, the agent authority, the risk intelligence, the governance intelligence, the talent, and the integration that turn governance from a brake into an accelerator.

When it comes to governance,

attention is not all you need.

THE CAIO CHANGE AGENDA — MID-2026 OPERATING CONTEXT

Shadow AI vs. safe enablement. Over a third of employees share sensitive data with unsanctioned AI tools. McKinsey: the scaling problem is not employee readiness — it's leaders failing to steer fast enough.

IBM, McKinsey 2025–26

Pilots vs. real workflow redesign. Only 25% have moved 40%+ of pilots into production. Only 1% of leaders call their company mature. Workflow redesign has the biggest EBIT impact — yet only 21% have fundamentally redesigned any workflows.

Deloitte, McKinsey 2025–26

Accountability vs. committee fog. AI governance is jointly owned, with two leaders in charge on average. Maturing organizations are moving from committee-heavy models to clear lines of accountability.

McKinsey, PwC 2025–26

Data trust vs. garbage-in scaling. 45% cite data accuracy or bias as the leading barrier to scaling AI. 42% cite insufficient proprietary data. Companies feel less prepared operationally — infrastructure, data, risk, talent — than they do strategically.

IBM, Deloitte 2025–26

Principles vs. operational controls. Most firms have moved past writing Responsible AI principles. The hard part: translating them into inventory, monitoring, standards, and repeatable controls at scale.

PwC 2025–26

Agents vs. human accountability. Three-quarters of companies plan to deploy agentic AI within two years. Only 21% have a mature governance model for agents. AI stops answering questions and starts taking actions — governance hasn't caught up.

Deloitte, PwC 2025–26

Speed vs. audit / regulatory readiness. EU AI Act broad enforcement begins August 2, 2026. Providers and deployers already need to demonstrate AI literacy. Auditors may assess how AI is governed, documented, and controlled in SOX-relevant processes.

EU AI Act, PwC 2025–26

Board fluency vs. management lag. Nearly half of executives view AI as a major risk area — only 10% of directors do. The oversight gap: boards should treat AI as a full-board strategic issue, not a side-topic.

PwC Board Effectiveness Survey 2025

When management ideas live in PDFs, they become rigid. The tools, diagnostics, and methods behind this framework are open and inspectable.

THE DURABLE FRAMEWORK — SIX DIMENSIONS OF BEHAVIORAL GOVERNANCE

STANDARDS

WHAT ENACTED GOVERNANCE LOOKS LIKE

Espoused → Enacted — closing the gap

MEASURES

HOW YOU'D KNOW — THREE-LAYER ASSESSMENT

Self-report · Evidence · Behavioral observation

DIMENSION 1

Decision Rights

“If someone wants to spin up a small pilot, who do they have to ask? If the answer is 'three committees and nine months,' they'll build in the dark.”

← Shadow AI Proliferation · Velocity

Trust link → RIST™ institutional trust. See Leadership Delta D5, Change Agility P3.

STANDARDS — ENACTED GOVERNANCE

Governance Speed Standard

The approval architecture must be faster than the workaround. Gravitee: 81% of teams actively deploying, only 14.4% with full approval. If governance takes months and an agent takes an afternoon, people build in the dark. Governance speed is a design choice.

AI Governance Circle Protocol

Sprint-based governance cadence: decision rights reviewed, contested, and reallocated in response to conditions on the ground — not committee cycles that move at institutional speed while agents multiply at machine speed.

Shadow AI Integration Path

The standard is not “stop shadow AI” — that ship has sailed. The standard: a clear, fast path from shadow to sanctioned. Detection → assessment → integration, not detection → punishment → repeat.

MEASURES — THREE-LAYER ASSESSMENT

Self-Report

“If you wanted to launch a small AI pilot tomorrow, who would you ask? How long would it take?” Diagnostic: “I don't know” or “months” = espoused governance. “I'd just build it” = failed governance.

Evidence Layer

Pilot request → approval time (target: <2 weeks for low-stakes). Shadow AI ratio: sanctioned agents / total agents, so a falling ratio means shadow AI is growing. Governance Circle cadence logs. Governance modifications in last 90 days.
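As a rough illustration of how the first two evidence-layer measures could be computed, the sketch below derives request-to-approval time and the sanctioned / total agents ratio from two small record lists. The record shapes, field names, and sample data are hypothetical and not part of the framework; only the two-week target comes from the text.

```python
from datetime import date
from statistics import median

TARGET_DAYS = 14  # "<2 weeks for low-stakes", taken from the text


def approval_days(requests):
    """Days from pilot request to approval, for approved requests only."""
    return [
        (r["approved"] - r["requested"]).days
        for r in requests
        if r["approved"] is not None
    ]


def sanctioned_ratio(agents):
    """Share of known agents that are sanctioned (sanctioned / total)."""
    if not agents:
        return 0.0
    return sum(1 for a in agents if a["sanctioned"]) / len(agents)


# Hypothetical sample data, for illustration only.
requests = [
    {"requested": date(2026, 1, 5), "approved": date(2026, 1, 12)},
    {"requested": date(2026, 1, 8), "approved": date(2026, 2, 19)},
    {"requested": date(2026, 1, 20), "approved": None},  # still pending
]
agents = [
    {"name": "invoice-bot", "sanctioned": True},
    {"name": "sales-summarizer", "sanctioned": False},
    {"name": "hr-faq", "sanctioned": False},
    {"name": "code-review", "sanctioned": False},
]

days = approval_days(requests)
print("median approval days:", median(days))
print("within target:", median(days) < TARGET_DAYS)
print("sanctioned ratio:", sanctioned_ratio(agents))
```

Pending requests are excluded from the timing figure here, which flatters the number; a stricter version might count their age so far.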

Behavioral Observation

Do people go through governance or around it? Key ratio: formal requests vs. informal deployments. If governance channels are quiet but AI usage is growing, governance is being routed around — a design problem, not a people problem.

DIMENSION 2

Agent Authority

“What does the AI get to decide on its own? What emails can it reply to? What transactions can it approve? This cannot be static.”

← Complexity · Velocity · Three-Body Problem

Trust link → RIST™ task trust. See Leadership Delta D5, Change Agility P3.

STANDARDS — ENACTED GOVERNANCE

Dynamic Autonomy Calibration

Agent autonomy calibrated to model reliability — recalibrated as reliability changes (Floridi's oversight calibration). Model improves → dial turns up. New hallucination domain → dial turns down. Calibration is continuous, not annual.

Consequence-Based Autonomy Tiering

Low stakes (drafts, brainstorming): full autonomy, spot-check. Medium (customer-facing, analytical): agent acts, human reviews before delivery. High (financial, legal, reputational): human-in-the-loop mandatory. Tier determined by consequence, not capability. Critical dependency: if underlying data is garbage, autonomy dials down regardless of model quality — authority is a data question before a technology question.
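One way to read the tiering rule is as a lookup on consequence with a data-quality override. The sketch below is a minimal version under stated assumptions: the category names, the "unknown work defaults to the strictest tier" rule, and the "untrusted data moves one tier stricter" rule are illustrative choices, not something the framework specifies.

```python
from enum import Enum


class Tier(Enum):
    LOW = "full autonomy, spot-check"
    MEDIUM = "agent acts, human reviews before delivery"
    HIGH = "human-in-the-loop mandatory"


# Consequence categories taken from the text; the keys are hypothetical labels.
CONSEQUENCE_TIERS = {
    "draft": Tier.LOW,
    "brainstorming": Tier.LOW,
    "customer_facing": Tier.MEDIUM,
    "analytical": Tier.MEDIUM,
    "financial": Tier.HIGH,
    "legal": Tier.HIGH,
    "reputational": Tier.HIGH,
}
_ORDER = [Tier.LOW, Tier.MEDIUM, Tier.HIGH]


def autonomy_tier(consequence, data_trusted):
    """Tier by consequence, then dial autonomy down if the data is not trusted."""
    # Assumption: unrecognized work is treated as high stakes.
    tier = CONSEQUENCE_TIERS.get(consequence, Tier.HIGH)
    # Assumption: untrusted data moves the tier one step stricter.
    if not data_trusted and tier is not Tier.HIGH:
        tier = _ORDER[_ORDER.index(tier) + 1]
    return tier


print(autonomy_tier("draft", data_trusted=True).value)
print(autonomy_tier("draft", data_trusted=False).value)
```

Note that model capability never appears as an input, which mirrors the point that the tier is determined by consequence, not capability.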

Agent Chain Governance

The authority question: not just “what can this agent do?” but “what can it authorize other agents to do?” Autonomous scope expansion without human decision is the failure mode. Every agent chain needs: scope boundary, escalation trigger, human checkpoint at defined intervals.

MEASURES — THREE-LAYER ASSESSMENT

Self-Report

“Do you know what your AI agents are authorized to do autonomously? Has that scope changed in the last 90 days?” “They do whatever they were set up to do” = ungoverned autonomy.

Evidence Layer

Agent autonomy map: scope per agent + last review date. Autonomy tier distribution. Scope change frequency. Agent chain depth: max chain length without human checkpoint. Orphaned agent count.
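The "max chain length without human checkpoint" measure can be sketched as a longest-path search over a delegation map. This is an illustrative reading, assuming delegations are recorded as a mapping from each agent to the agents it can authorize, and that human checkpoints are attached to named agents; both structures and all agent names are hypothetical.

```python
def max_unchecked_run(delegates, checkpoints):
    """Longest run of consecutive agents with no human checkpoint on any path."""
    memo = {}
    visiting = set()

    def run_from(agent):
        # Length of the longest unchecked run that starts at `agent`.
        if agent in checkpoints:
            return 0  # a human checkpoint ends the run
        if agent in memo:
            return memo[agent]
        if agent in visiting:
            raise ValueError(f"delegation cycle at {agent!r}")
        visiting.add(agent)
        longest = 1 + max(
            (run_from(nxt) for nxt in delegates.get(agent, [])), default=0
        )
        visiting.discard(agent)
        memo[agent] = longest
        return longest

    agents = set(delegates) | {n for ns in delegates.values() for n in ns}
    return max((run_from(a) for a in agents), default=0)


# Hypothetical chain: intake -> triage -> pricing -> refund
delegates = {"intake": ["triage"], "triage": ["pricing"], "pricing": ["refund"]}

print(max_unchecked_run(delegates, checkpoints=set()))
print(max_unchecked_run(delegates, checkpoints={"pricing"}))
```

A delegation cycle raises an error rather than returning a number, on the assumption that a loop of agents authorizing each other is itself a finding worth surfacing.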

Behavioral Observation

When a new model release changes capability, does the organization recalibrate agent autonomy — or does the same scope persist? If agent authority hasn't changed in six months, it's not calibrated — it's frozen.

DIMENSION 3

Risk Intelligence

“Not 'identify and manage risks' — that's structural and boring. What do you DO with risk data? And what do you do when you don't have it?”

← Shadow AI Proliferation · Regulatory Volatility

STANDARDS — ENACTED GOVERNANCE

Risk Data → Decision Change

When risk data reaches a decision-maker, something observably changes. If risk data circulates without altering behavior, governance is decorative. Test: name the last governance decision that changed because of risk data. If you can't, risk intelligence is absent.

Decision Under Acknowledged Uncertainty

Most AI risk data doesn't exist yet — failure modes are novel, precedent is thin. The standard: make explicit decisions under acknowledged uncertainty. “We don't know the risk profile; here's how we're proceeding; here's what we're watching.” Deploying without acknowledging the gap and freezing until certainty arrives are both governance failures.

AI Risk Literacy

Can the people making governance decisions read the risk landscape? Not technical risk (that's engineering) — organizational risk: reputational exposure, regulatory surface, business-model disruption. Risk literacy is a capability, not a compliance checkbox.

MEASURES — THREE-LAYER ASSESSMENT

Self-Report

“When did risk data last change a governance decision?” “What's your biggest AI risk where you lack adequate data?” Diagnostic: “I can't remember” + “we have it covered” = absent risk intelligence.

Evidence Layer

Decision change log: governance decisions modified by risk data in 90 days. Acknowledged uncertainty register: deployments with explicitly documented unknowns. Risk literacy scores. Incident response time + governance modification rate post-incident.

Behavioral Observation

When risk data is presented, does the room engage or glaze? When data is absent, does someone name the gap — or does the decision proceed as if certainty existed? The quality of the uncertainty conversation is the observable.

DIMENSION 4

Governance Intelligence

“The measurement system that governs the governance. The Five Dials™. Meta-governance — are the guardrails themselves working?”

← The Five Dials™ · Three-Layer Assessment

STANDARDS — ENACTED GOVERNANCE

The Five Dials™

Utilization Depth — integration into workflows, not adoption rate. Capability Expansion Rate — 1st-derivative: speed of new capability absorption. Trust Stability — RIST scores tracked over time. Iteration Velocity — governance response speed. Leadership Delta — gap between current leadership behavior and what AI demands.

Three-Layer Assessment Protocol

Every governance metric assessed at three layers: self-report, evidence, behavioral observation. The gap between layers IS the diagnostic.
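If the three layers are scored on a shared scale, the gap between them can be computed directly. The sketch below assumes a 0 to 100 score per layer for a single metric; the scale, the function names, and the sample scores are assumptions made for illustration, and the framework itself does not prescribe a scoring scheme.

```python
def layer_gaps(self_report, evidence, observation):
    """Pairwise gaps between the three assessment layers for one metric."""
    return {
        "self_report_minus_evidence": self_report - evidence,
        "evidence_minus_observation": evidence - observation,
        "self_report_minus_observation": self_report - observation,
    }


def widest_gap(gaps):
    """The layer pair that disagrees most, by absolute difference."""
    return max(gaps.items(), key=lambda kv: abs(kv[1]))


# Hypothetical scores for one governance metric.
gaps = layer_gaps(self_report=80, evidence=50, observation=30)
print(gaps)
print(widest_gap(gaps))
```

A large positive gap between self-report and observation is the espoused-versus-enacted pattern the framework describes: people say the governance works better than behavior shows.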

Governance Iteration Cadence

Governance review aligned to model release cadence. If technology changes every 6 weeks, governance that reviews every 6 months is governing a system that no longer exists.

MEASURES — META-GOVERNANCE

Self-Report

“Is the governance system itself measured — or assumed to be working?” “When did we last change a protocol based on evidence it wasn't working?” Never revised = either perfect or unmeasured.

Evidence Layer

Five Dials dashboard: all five metrics tracked, trended, reviewed at cadence. Governance change log: protocols modified in last 90 days based on measurement data. Empty log = measurement disconnected from action.

Behavioral Observation

Does anyone reference Five Dials data in governance reviews — or is discussion anecdote-driven? “The data shows X, so we're changing Y” = governance intelligence enacted.

DIMENSION 5

1st-Derivative Talent

“Not what skills people have — the rate at which they acquire new ones. And at CAIO level: is the AI talent pipeline bleeding the best?”

← Epistemically Different · Velocity

STANDARDS — ENACTED GOVERNANCE

Rate of Acquisition, Not Inventory

Traditional talent governance measures what people know. 1st-derivative measures how fast they're learning. A team with moderate skills and a steep curve beats a team with strong skills and a flat one. Track the derivative, not the function.
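The derivative-versus-function point can be made concrete with a little arithmetic. The sketch below assumes each team has a quarterly capability score on some common scale and extrapolates linearly; the scores, the scale, and the linear extrapolation are all illustrative assumptions rather than anything the framework measures this way.

```python
def learning_rate(scores):
    """Average quarter-over-quarter change: a discrete first derivative."""
    if len(scores) < 2:
        raise ValueError("need at least two quarterly scores")
    return (scores[-1] - scores[0]) / (len(scores) - 1)


def quarters_to_catch_up(leader, chaser):
    """Quarters until the chaser matches the leader, extrapolating linearly.

    Returns 0.0 if the chaser is already level or ahead, and None if the
    chaser is not closing the gap.
    """
    gap = leader[-1] - chaser[-1]
    if gap <= 0:
        return 0.0
    closing_speed = learning_rate(chaser) - learning_rate(leader)
    if closing_speed <= 0:
        return None
    return gap / closing_speed


# Hypothetical quarterly capability scores.
strong_flat = [70, 71, 72, 73]
moderate_steep = [50, 56, 62, 68]

print(learning_rate(strong_flat), learning_rate(moderate_steep))
print(quarters_to_catch_up(strong_flat, moderate_steep))
```

On these made-up numbers the steeper team starts twenty points behind and draws level within a quarter of the last measurement, which is the sense in which the slope matters more than the current level.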

Dynamic Role Architecture

Roles evolve as AI capability evolves — not static job descriptions frozen at hire date. When AI takes over 40% of a role's tasks, the role must transform — not just lose headcount.

Talent Pipeline Governance

At CAIO level: who is leaving and why? If top AI talent exits because governance is too slow or culture punishes experimentation, governance is eating its own seed corn. Retention of high-velocity learners: a governance metric, not an HR metric.

MEASURES — THREE-LAYER ASSESSMENT

Self-Report

“How much faster are you learning AI skills this quarter vs. last?” “Has your role changed in the last 6 months because of AI?” Self-assessed learning velocity, calibrated against evidence.

Evidence Layer

Capability expansion rate (Five Dials). Upskilling velocity: new capabilities per quarter per team. Top AI performer retention rate. Role evolution: % of roles materially redefined in 12 months. Attrition analysis: are fast learners staying or leaving?

Behavioral Observation

Are people experimenting with new AI tools — or using the same ones the same way as 6 months ago? Learning velocity is visible in tool adoption patterns, workflow evolution, and the quality of questions asked. Stagnation is equally visible.

DIMENSION 6

Strategic Coherence

“Strategic coherence is as important as strategic excellence.” — Paul Gibbons

← Complication · Stack Sprawl

STANDARDS — ENACTED GOVERNANCE

Whole-System Visibility

Someone has sight of the full AI landscape: agents, models, risk exposure, talent pipeline, strategic alignment. The CAIO dashboard is the governance instrument — where CTO data governance, CHRO talent data, and risk-function exposure maps converge. The CAIO doesn't own data infrastructure (CTO/CIO does). But the CAIO owns the question: are we locked into SaaS data moats? Is data portable? Data governance is the CTO's job; data strategy as it constrains AI adoption is the CAIO's.

Organizational Integration

Is the agent that marketing built talking to the agent that ops built? Is there a learning loop where one team's discovery accelerates another's? The CAIO's unique problem: the connective tissue of adoption. Pockets of excellence that don't connect are not a strategy; they're a coincidence.

Cross-Dimension Coherence

The five other dimensions must cohere. Decision rights contradicting agent authority = confusion. Talent strategy disconnected from risk intelligence = blind spots. Are all six dimensions pulling together, or optimizing locally while the system fragments? Every governance decision should be traceable to a strategic objective; if it can't explain why it exists, it's bureaucratic accretion.

MEASURES — THREE-LAYER ASSESSMENT

Self-Report

“Can anyone describe the full AI governance picture?” “Do you know what other teams are building with AI?” If every team describes their initiatives but no one describes the system, coherence is absent.

Evidence Layer

CAIO dashboard: exists and integrates all six dimensions? Cross-team integration map. Organizational learning velocity: time from one team's discovery to another's adoption. Cross-dimension contradiction audit. Data portability score. Vendor lock-in assessment: SaaS moat exposure across AI estate.

Behavioral Observation

Does AI governance review happen as an integrated whole — or does each dimension present separately with no synthesis? “But that contradicts what we decided in risk governance” = coherence working. No one notices the contradiction = coherence absent.

INTELLECTUAL PROVENANCE

Paul Gibbons' Governance Reading List

The scholarship that built this framework

The Nature of Technology (2009)

Combinatorial evolution — technologies building on technologies. The intellectual foundation for agentic proliferation and agent chain governance.

The Rapid Adoption of Generative AI (2024, rev. 2025)

By late 2024, nearly 40% of working-age adults used generative AI. The empirical anchor: the issue is no longer awareness but scaling, governance, and redesign.

AI's Use of Knowledge in Society (2025)

AI redistributes expertise and changes where judgment lives.

Generative AI at Work (2023)

AI diffuses best practice and accelerates capability building.

Reshuffle (2025)

AI reorganizes coordination, not just tasks.

A Field Experiment on Generative AI Reshaping the Nature of Expertise (2025)

AI alters collaboration and functional boundaries, not just productivity.

Regulation (EU) 2024/1689 — Artificial Intelligence Act

Risk-based regulatory framework for AI systems in the EU. Broad enforcement begins August 2, 2026. The compliance baseline every governance framework must now be built against.

The Ethics of AI (2023)

Calibrating human oversight to AI reliability. The philosophical backbone of D2 Agent Authority.

Adopting AI: The People-First Approach (2025)

A human-centered approach to AI strategy, adoption, and ethics. The accessible companion to the Adaptive Adoption™ framework.

The AI Reckoning: How Boards Can Evolve (December 2025)

AI-posture model, six board governance actions. The best board-briefing companion for CAIO governance committee design.

Governing the Commons (1990)

Polycentric governance — overlapping authority structures outperform centralized control for complex shared resources.

The Fifth Discipline (1990)

Systems thinking — the discipline of seeing wholes. Foundation for D6 Strategic Coherence.

Antifragile (2012)

Governance that benefits from disorder. The aspiration for D3 Risk Intelligence.

Advancing Responsible AI Innovation: A Playbook (September 2025)

Nine plays for governance design. Strongest 2025 treatment of where governance sits, separating delivery from assurance, and moving from centralized to federated models.

The pivot from governance-as-brake to governance-as-accelerator. The strategic case that well-designed governance enables speed — the argument this framework is built on.

The Age of Surveillance Capitalism (2019)

Governance implications of data extraction at scale. Why autonomy is a governance question, not a technical one.