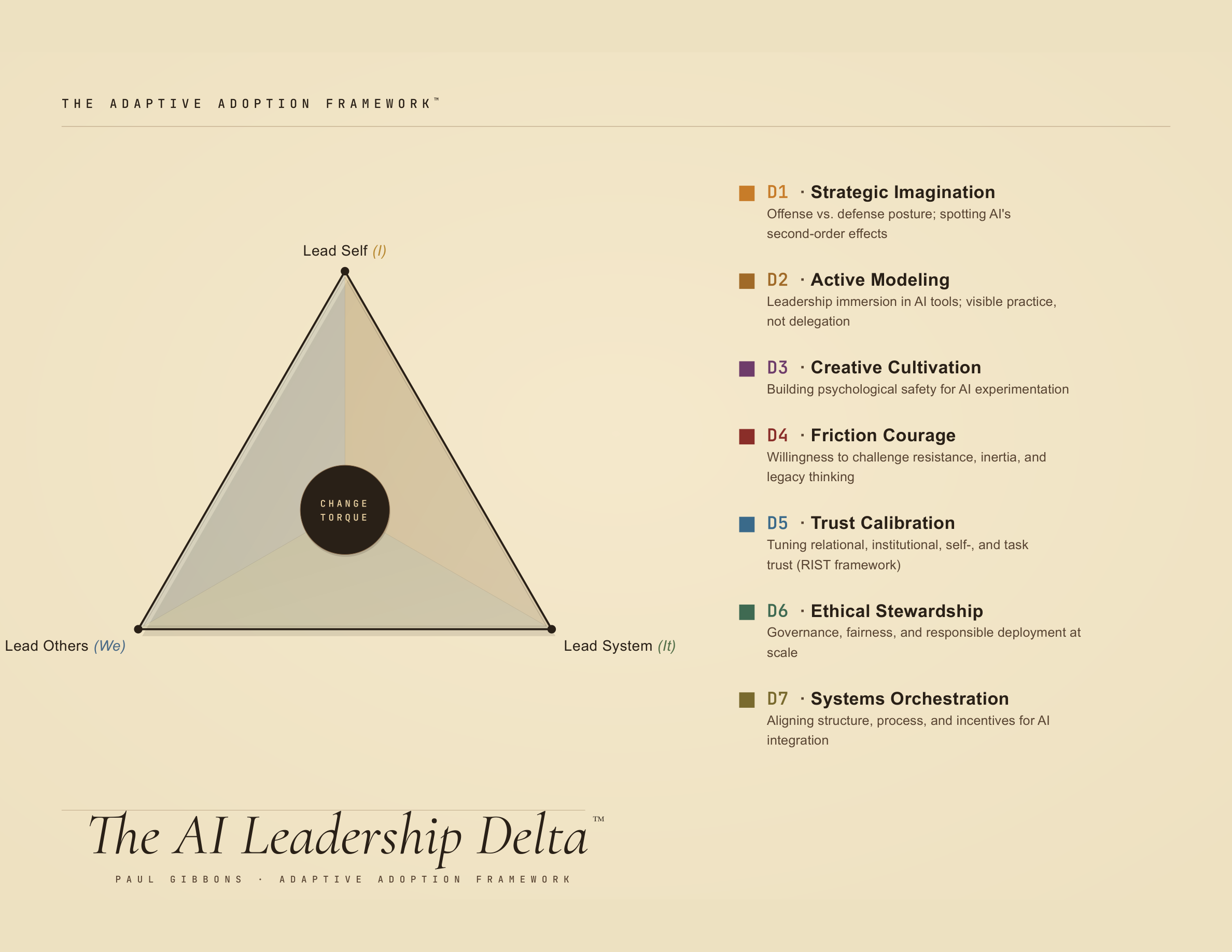

ADAPTIVE ADOPTION™ — AI LEADERSHIP DELTA™

The Leadership Delta

Calling someone a sponsor doesn't make them a leader. Calling someone a change champion doesn't make them a leader. Leadership isn't boxes or titles.

Most AI leadership advice is old leadership theory with a fresh coat of AI paint. See if this is really different.

THE PROBLEM

The Specific Leadership Problems AI Generates

- Leaders can no longer govern from a distance. If they do not use the tools themselves, they cannot judge what is real, what is possible, or what is dangerous; nor can they empathize with the frustrations their teams feel or experience the potential viscerally.

- Judgment gets harder, not easier. AI produces fluent answers at speed; the leadership job is no longer confidence, but calibration.

- Strategy becomes unstable. Capabilities shift monthly, competitive advantage decays faster, and waiting is itself a strategic choice.

- Experimentation becomes leadership work. If leaders do not model learning in public, the organization retreats into caution, theater, or shadow AI.

- Expertise gets inverted. Leaders now depend on people below them for technical truth, while still carrying accountability at the top.

- Friction gets political. Legal, risk, IT, HR, and the business all pull differently; leaders must know which frictions to tear down and which are load-bearing.

- Trust becomes operational. Naming trust on a slide fixes nothing. Leaders must decide where trust should rise, where it should fall, and how to tell the difference.

- Ethical judgment becomes daily work. “Can we?” is easy. “Should we?” is now a Tuesday morning question.

THE RESPONSE

Why Leadership Delta Is Different

- Not a search-and-replace job. This framework starts from the claim that AI changes leadership itself, not just the agenda around it.

- Behavioral, not decorative. It focuses on what leaders actually do: what they model, what they permit, what they challenge, and what systems they create.

- Not a virtue list in a nice font. “Be visionary,” “be curious,” and “be ethical” are too vague to help anyone lead.

- Trust is treated as a live variable, not a slogan. Naming trust does not fix trust; calibration does.

- Ethics is not outsourced. Legal and compliance matter, but they cannot carry the moral burden of AI for the enterprise. Leadership Delta treats ethical judgment as a daily leadership task, not a governance appendix.

- Open source, not consultancy theater. The argument, tools, diagnostics, and methods are inspectable, usable, and designed to improve in the open.

- Part of a larger system. Leadership Delta sits inside Adaptive Adoption alongside innovation and behavioral governance; it is not a lonely box diagram pretending to explain the world.

When management ideas live in PDFs, they become rigid. The tools, diagnostics, and methods behind this framework are open and inspectable.

EXPLORE THE FRAMEWORK

Below: where the tools can be found, the high-level elements of the framework, and the source material it is based on.

THE LEADERSHIP DELTA FRAMEWORK

LEAD SELF

HABITS & PRACTICES

Ethos — character, discipline

LEAD OTHERS

BEHAVIORS & RELATIONSHIPS

Pathos — mobilizing, relating

LEAD SYSTEM

ARCHITECTURE & CONDITIONS

Logos — design, structure

DIMENSION 1

Strategic Imagination

“When they talk about the next quarter, they mean the next quarter century.” Offense, not defense. Building, not renting.

THE PROPHET

← Temporal Shear · Immersion Condition

LEAD SELF — HABITS & PRACTICES

Frontier Time

Protected, structured cognitive space where the question is: what does this technology make possible that we haven't imagined? Think Walks: 3-hour hikes, no operational talk, forced connection-making. The practice is making time. The constraint isn't vision — it's the quarterly clock that eliminates the space where strategic imagination happens.

Right Inputs Discipline

Securing the right inputs before the protected time begins. Frontier developments, not exec summaries. Direct contact with what's shipping, not filtered briefings. Christensen's insight: incumbents don't fail because they can't see; they fail because the wrong inputs make the wrong strategy feel rational.

LEAD OTHERS — BEHAVIORS

Naming the Scared Money

Calling out incrementalism for what it is: fear disguised as prudence. Defending the existing position felt rational at RIM (BlackBerry) quarter by quarter, until it was fatal. The leader's behavioral job: say the uncomfortable thing in the room, then hold the arc when Q2 is soft and the board wants to flinch. Every earnings call is a test: collapse into the accountability clock or hold the generational narrative.

LEAD SYSTEM — ARCHITECTURE

AI-Enabled AI Strategy

The recursive loop: build AI systems that augment the organization's capacity to think strategically about AI. Research agents that surface frontier developments. AI-assisted scenario planning. The technology itself as the enabler of its own strategic adoption. If the leader is doing Frontier Time alone with a browser, the system has failed.

Frontier Time for Everyone

Structurally guaranteeing that protected strategic thinking time isn't a C-suite luxury. Time, permission, and tools — distributed. The team lead in operations needs Frontier Time as much as the CAIO. If only the top floor thinks expansively, the organization's strategic intelligence is a bottleneck, not a capability.

DIMENSION 2

Active Modeling

“An AI sponsor who doesn't use AI is a contradiction — not a sponsor.”

THE CARPENTER

← The Immersion Condition · Complexity

LEAD SELF — HABITS & PRACTICES

Build Week

Structured immersion: dedicated time building with AI tools, not reading about them. Bill Gates had Think Week (information). AI-era leaders need Build Week (experience). The input is contact knowledge, not briefings.

Tinker Time

Regular, hands-on building with AI embedded in real work. Not demos, not showcases — genuine making. Satya Nadella spending weekends with tools isn't about becoming a developer. It's about ensuring strategic judgment isn't epistemologically compromised. The leader who hasn't built with the technology hasn't earned their strong opinions about it.

LEAD OTHERS — BEHAVIORS

Public Learning

Visible, honest AI use — including public acknowledgment of failure. What leaders are seen doing is the policy that actually governs behavior, regardless of what the policy document says (Argyris & Schön, theory-in-use). Show the messy drafts, the failed prompts, the learning curve.

Earned Opinion Standard

Explicitly naming that strong opinions about AI require direct experience. The “fancy autocomplete” position, however intellectually elaborated, rests on armchair inference when held by people who haven't touched the technology. Modeling this standard publicly.

LEAD SYSTEM — ARCHITECTURE

Immersion Infrastructure

Organization-wide access to frontier tools, dedicated experimentation time (not “innovation Friday” theater), sandbox environments. If the system doesn't enable contact, the leader's modeling is performative.

Competence Visibility Systems

Mechanisms that make AI adoption visible without surveillance: team showcases, workflow-sharing platforms, “how I used AI this week” rituals. The system makes modeling contagious, not mandated.

DIMENSION 3

Creative Cultivation

The conditions that determine whether AI ideas survive first contact or die on arrival. Not a slogan — a selection pressure.

THE GARDENER

← Fear and Dissent

LEAD SELF — HABITS & PRACTICES

First-Response Discipline

Your first visible reaction to a new AI use case is the single strongest signal the organization reads. One dismissive response kills ten experiments. The practice: notice your default — curiosity or judgment — and correct before it lands. Ekvall's research is clear: leadership response to novelty is the highest-leverage predictor of whether ideas survive at all.

LEAD OTHERS — BEHAVIORS

Rewarding Intelligent Failure

Publicly rewarding well-designed experiments that failed. Conditions are set by what gets rewarded, not what gets announced. The question isn't “did it work?” but “was the experiment well-designed and did we learn?” If only successes get airtime, the organization learns to hide failures, and hidden failures compound.

Dissent as Signal, Not Threat

Treating organized resistance to AI as information, not insubordination. No prior enterprise technology generated a counter-movement. Nobody said “over my dead body” about SAP. The dissent is genuine and often principled — engage it or lose the signal.

LEAD SYSTEM — ARCHITECTURE

Safe-to-DO Infrastructure

Not just psychological safety to speak — structural safety to act. Budget lines for experiments with no required ROI projection. KPIs that measure learning rate, not just success rate. If the system punishes exploration, no amount of leadership rhetoric changes what people actually do.

Cognitive Diversity as System Design

The team that thinks the same way will adopt AI the same way — and miss everything the dominant pattern doesn't contain. System-level commitment to cognitive diversity: who is experimenting, who is benefiting, who is being left behind. Measure it quarterly or it's decorative.

DIMENSION 4

Friction Courage

The failure mode isn't stupidity; it's cowardice. Leaders know the silos are broken. They don't act.

THE LIBERATOR

← Fear and Dissent · Complexity

LEAD SELF — HABITS & PRACTICES

Purge Self-Frictions

Pro-level self- and time-management as a leadership prerequisite, and that means ruthless, not incremental. The exec who can't manage their own calendar, who says yes to everything, whose inbox runs them, cannot credibly liberate an organization from structural friction. You can't tell legal to take a hike if you can't tell your own meeting schedule to take a hike. Purge the life structures and habits that make you slow, scattered, and reactive. The first friction you break is your own inability to say no: to commitments, to meetings, to your own comfortable routines.

Courage Inventory

Monthly honest audit: “What friction do I know is broken that I haven't acted on, in my own work AND in the organization? What am I avoiding, and why?” The inventory separates strategic patience from cowardice. Most leaders know exactly where the broken frictions are. They lack the courage to act on either front.

LEAD OTHERS — BEHAVIORS

Break the Structures, Love the People

The paradox at the heart of this dimension. Welch-level willingness to destroy unproductive structures with genuine care for the humans inside them. The leader who breaks structures AND breaks people is a sociopath. The leader who loves people but won't break structures is a coward. Both are common. Neither works. Gut the silo, protect the person. Tell legal to take a hike, take the lawyer to lunch.

Naming the Cowardice

Calling out organizational cowardice — including your own — as a leadership act. “We all know this governance process is theater. Why are we still doing it?” The Fortune 500 default is to call friction “governance” and do nothing.

LEAD SYSTEM — ARCHITECTURE

The Dual Mandate — Protect AND Expand

The protect/break ratio is not fixed — it's calibrated to ambition. Low growth ambition: you can protect heavily, optimize incrementally, keep the frictions. High growth ambition: you have to break things, accept some casualties, move fast through discomfort. The organizational sin is having high ambition and a protect-heavy posture — wanting transformation outcomes with optimization behaviors. Tailored risk matched to real ambition.

Friction Audit Protocol

Systematic mapping of deliberate vs. accidental frictions. Deliberate frictions (ethical review, security checks) are load-bearing — protect them. Accidental frictions (legacy approval chains, silo-driven handoffs, compliance theater) are structural debt — destroy them. If removing a blocker requires a committee, the committee is the blocker.

DIMENSION 5

Trust Calibration

Trust is not a feeling to manage — it is a four-dimensional dynamic to calibrate. Continuously.

THE EMPATH

← Fear and Dissent · Simultaneous Under/Overtrust

LEAD SELF — HABITS & PRACTICES

Self-Trust Audit — Adaptive Self-Efficacy

The S in RIST. The question isn't “Am I competent with AI?” but “Am I confident in my ability to learn AI?” First-derivative talent: your rate of learning matters more than your current knowledge. Concluding “AI can't be trusted” after a bad experience with an early model was reasonable in 2022. Holding that view unchanged in 2026 is career-ending. The practice: recalibrating confidence continuously, not in what you know, but in your capacity to close the gap.

Know Your Trust Defaults

Every person arrives at trust decisions carrying priors — emotional, cultural, biographical. AI's language, tuned for helpfulness and warmth, triggers trust responses uncalibrated to actual capability. Skeptics undertrust despite strong performance. Deferrers overtrust despite significant limitations. Neither response is calibrated. Both feel like judgment. The practice: know which one you are.

LEAD OTHERS — BEHAVIORS

Diagnose Before Prescribing — The RIST Diagnostic

Most trust interventions fail because organizations treat the symptom (low adoption) rather than the specific trust dimension that has broken. RIST identifies four: Relational, Institutional, Self-Trust, Task Trust — each requiring a different intervention. Applying the wrong one wastes time and signals incompetence — which makes trust worse. Diagnose which dimension has actually broken before prescribing.

Trust Is Behavioral, Not Communicative

Stop treating trust as a communications problem. Words without deeds destroy trust faster than silence. The leader who announces “we're building trust” while running nine-month legal review cycles for AI tools is not building trust — they're performing it. Every tool in the RIST toolkit is behavioral: what you do, not what you say.

LEAD SYSTEM — ARCHITECTURE

Consequence-Based Trust Tiering

The T in RIST, and the only dimension most organizations manage. Task trust calibrated by consequence, not by anxiety: low stakes, use freely; high stakes, expert review required, evidence trail documented. Undertrust is the expensive failure: productivity forgone. Overtrust is the dangerous failure: output laundering, where AI generates and a human signs off without meaningful review. Both are managed simultaneously, by design.

Assume Fallibility, Not Bad Intent

Permission gates and approval cycles don't just slow AI adoption — they kill the experimentation that makes adoption valuable. The deeper cost is talent: people who feel unblocked build; people who feel untrusted comply — minimally, defensively, without the curiosity that makes AI adoption work. Design systems where well-intentioned humans can succeed safely — where errors are caught because the architecture makes catching errors easy, not because someone is watching.

DIMENSION 6

Ethical Stewardship

Ethics isn't compliance and it isn't moralizing — it's phronesis: the practical wisdom to navigate novel moral waters where the rules don't exist yet.

THE HELMSMAN

← Ethical Volatility · Ethical Fading

LEAD SELF — HABITS & PRACTICES

Phronesis — The Helmsman's Judgment

Episteme is knowing the charts and the weather systems — the ethical frameworks. Techne is sail handling and navigation — applying rules to cases. Phronesis is the practical wisdom that sits above both: Is this wind shift temporary or a front? Do I reef now or hold course? The leader with episteme and techne but no phronesis knows the compliance rules and can operate the technology but lacks the judgment to ask “just because we can, should we?”

Ethical Fading Awareness

The discipline of noticing when moral questions get reclassified as “just business decisions.” Ethical fading is the gradual, invisible process by which the ethical dimension disappears from view — the ROI model that strips out the displacement impact, the “alignment” initiative that's actually coercion with a friendly name. The practice: regularly asking “what are we not seeing because we've framed this as a business problem?”

LEAD OTHERS — BEHAVIORS

Ethical Judgment Without Moralizing

The word “ethics” has almost no currency at board level. The actual skill: offering clear moral judgments — this is right, this is wrong, this creates harm — without rancor, without condescension, in the language of the room. The concrete move: knowing when this is a utility question (what outcome?), when it is a rights question (what lines?), when it is a character question (who are we becoming?) — and selecting the right lens for the room you are in.

Red Line Visibility

Publicly naming what you will not do — and accepting the cost. The leader who says “we won't ship this even though it's profitable” has done more for ethical culture than a hundred policy documents. The red line must be visible and costly to be credible. This is the behavioral opposite of ethical fading: making the moral dimension of decisions more visible, not less, especially under pressure.

LEAD SYSTEM — ARCHITECTURE

Anti-Fading Architecture

Ethical fading isn't individual weakness — it's what systems produce when no one designs against it. The system-level response: decision templates that force the ethical dimension to remain visible — pre-mortems that include “who could this harm?” as a required field. The goal is not more ethics committees — it's making it structurally harder for the moral dimension to disappear from view.

Ethical “Stop Cord”

Frontline veto power on AI deployment — anyone can pull the cord, and pulling it is celebrated, not punished. Distributed ethical authority rather than concentrated committees that move too slowly. The stop cord works because it assumes the people closest to the work see things the hierarchy cannot. It is the system-level expression of phronesis: practical wisdom distributed, not hoarded.

DIMENSION 7

Systems Orchestration

The conductor produces coherent music from incommensurable instruments without playing any of them. Inverted expertise.

THE CONDUCTOR

← Stack Sprawl

LEAD SELF — HABITS & PRACTICES

Epistemic Humility Practice

Cultivating comfort with knowing less than the people you lead — permanently, not temporarily. Every prior leadership model assumes the leader has or can acquire superior knowledge. Stack Sprawl makes this impossible. The practice is learning to lead from a position of curated ignorance.

Cross-Domain Literacy Habit

Regular shallow-but-wide engagement across incommensurable domains: infrastructure, models, orchestration, applications, enterprise, physical. Not mastery — pattern recognition. The conductor reads every score, plays no instrument.

LEAD OTHERS — BEHAVIORS

Translation Between Ontologies

Active mediation between teams that literally speak different languages: data engineers, UX designers, legal, ethics, frontline users. The leader's value is making distributed knowledge legible across boundaries — asking the right questions of people who know more than you in their domain.

Knowing When to Override vs. Defer

The judgment call that defines orchestration: when to trust the specialist and when to override. The conductor who always defers produces cacophony. The conductor who always overrides suppresses the distributed expertise that is the only adequate response to the stack's complexity.

LEAD SYSTEM — ARCHITECTURE

Integration Architecture

Organizational design that makes cross-domain collaboration structural, not heroic. Shared ontologies, translation layers between teams, governance that connects rather than silos. If integration depends on one leader's relationship capital, it's fragile.

Distributed Expertise Model

Reward systems, team structures, and decision rights designed for inverted expertise: the leader who insists on domain mastery before acting will be perpetually behind. Build the team as the instrument. The system's intelligence is the orchestra, not the conductor.

INTELLECTUAL PROVENANCE

Paul Gibbons' Leadership Reading List

The books that built this framework

Organizational Learning

Single-loop vs. double-loop learning. Leaders who learn about AI without learning from AI are single-looping.

Nicomachean Ethics

Phronesis — practical wisdom as the meta-virtue. The episteme/techne/phronesis distinction is the backbone of D6.

Blind Spots

Ethical fading — the invisible process by which moral dimensions disappear from business decisions.

Leadership (1978)

James MacGregor Burns, the original theorist of transformational leadership. The entire Delta is a transformational claim.

The Innovator's Dilemma

Why incumbents fail. The disruption logic behind D4's Dual Mandate — protect AND expand.

On War

Fog of war as analogue for the AI stack's opacity. The offense/defense strategic posture.

Shop Class as Soulcraft

The dignity and epistemology of working with your hands. You learn by making, not by reading about making.

Skill Acquisition

Novice-to-expert progression. Expertise is embodied, not propositional. Informs the Immersion Condition.

Thinking in Bets

Decision quality vs. outcome quality. The confidence interval discipline as practiced speech.

The Fearless Organization

Psychological safety — necessary but insufficient. D3 adds: safe to DO, not just safe to say.

Creative Climate Research

Ten dimensions of organizational creative climate. The empirical foundation for D3 Creative Cultivation.

Source Code (biography)

Think Week — protected cognitive space for strategic reading. Direct ancestor of Frontier Time, upgraded from information to immersion.

Integral Leadership (1999), in Work and Spirit

Early application of integral theory to leadership development — the philosophical architecture for the whole-person, whole-organization approach that runs through the Delta.

The Science of Organizational Change (2015, 2019)

The argument that change management must be rebuilt on behavioral science, complexity, trust, and ethics — not persuasion models from the 1940s. The intellectual progenitor of the Adaptive Adoption™ framework.

Tragedy of the Commons (1968)

The default outcome when AI governance is unmanaged — shared resources degraded by uncoordinated individual optimization.

Adopting AI: The People-First Approach (2025)

A human-centered approach to AI strategy, adoption, and ethics. The accessible companion to the Adaptive Adoption™ framework.

Leadership Without Easy Answers

Technical vs. adaptive challenges. The most dangerous failure: treating adaptive problems with technical solutions.

The Innovators; biographies of Jobs, Einstein, da Vinci

Leadership understood through enacted life, not abstracted principles. The biographical method as leadership pedagogy.

Thinking, Fast and Slow

Dual-process theory. Trust defaults and automation bias in D5 are System 1 failures in a System 2 domain.

Profiles in Courage

Political courage as the willingness to sacrifice career for conviction. The leadership failure mode is not ignorance but cowardice.

Adaptive Markets

Rationality is context-dependent, not fixed. Markets — and organizations — adapt or die.

The (Mis)Behavior of Markets

Fat tails, not bell curves. The fractal geometry of risk underpins the rejection of neat planning models.

Thinking in Systems

Leverage points and feedback loops. Where you intervene in the system matters more than how hard you push.

A Question of Trust (Reith Lectures)

Calibrated trust — not more trust, but more trust in the trustworthy. The critique of transparency-as-trust.

Governing the Commons

Polycentric governance — nested rules, distributed authority. The alternative to both centralized control and tragedy.

The Tacit Dimension

We know more than we can tell. The Immersion Condition: strategic judgment requires direct experience, not reports.

The Reflective Practitioner

Reflection-in-action. How professionals actually think in practice, not in theory.

The Signal and the Noise

Probabilistic thinking and the signal/noise distinction. The calibration discipline behind D5.

Cynefin Framework

Domain distinctions — simple, complicated, complex, chaotic. Matching the intervention to the domain.

Complexity and Management

The Three-Body Problem condition. Complicated is expert-solvable; complex is emergent and unpredictable.

Antifragile; The Black Swan; Skin in the Game

Systems that gain from disorder. Skin in the game as the prerequisite for consequential judgment.

Theory of Games and Economic Behavior

Von Neumann chose poker over chess as game theory's foundation. Incomplete information is the real game.

Integral Theory

The I-We-It framework. Four quadrants simplified to three domains: Lead Self, Lead Others, Lead System.

Ethics and the Limits of Philosophy

Moral theory alone is insufficient for practical life. The philosophical case for phronesis over theory.

Gibbons' original IP in this framework: The Six Conditions (Stack Sprawl, Complexity/Three-Body Problem, The Immersion Condition, Temporal Shear, Ethical Volatility, Fear and Dissent). The RIST Trust Framework™ (Relational, Institutional, Self-Trust, Task Trust). The I-We-It meta-architecture applied to leadership dimensions. Frontier Time. The Dual Mandate. The offense/defense strategic posture. First-derivative talent. The concept of ethical fading as system output. The entire AI Leadership Delta™ structure, naming, and operational content. All seven archetypes. The claim that change management as a discipline has not yet caught up with the demands of the AI era, and the attempt to close that gap.

THE APPLIED LAYER

Where This Was Applied

Three decades of leadership development, from boardrooms to the C-suite

1997

First Leadership Development Program

Board and top-100 leadership program for a regional bank in the UK. The starting point: leadership as practiced craft, not classroom theory.

2000

Founder & CEO — Future Considerations

Leadership development consulting firm competing with Duke, Harvard, and INSEAD for Fortune 500 executive education. Ranked #1 leadership boutique by Leadership Excellence Magazine. Gibbons was named a CEO “super-coach” by CEO Magazine.

HSBC · KPMG · PwC · Barclays · Shell · BP · Anglo-American · Microsoft · Zappos · Comcast

2010

Adjunct Professor & Keynote Speaker

Business ethics and leadership. Author of The Science of Organizational Change (2015, 2019) — the argument that change management needed to catch up with behavioral science, complexity, and trust.

2020

Partner — IBM Consulting

Accountable for thought leadership in organizational leadership, culture, and change. The mandate: fill the gaps left by 20th-century change ideas by building trust, behavioral science, and culture into how the world's largest enterprises approach transformation.

2025

Paul Gibbons Advisory — Adaptive Adoption™

AI adoption advisor to Fortune 500 leadership. Author of Adopting AI (2025). The AI Leadership Delta™ is the applied output of everything above — built in boardrooms, pressure-tested with executives, grounded in three decades of watching what actually changes leadership behavior and what doesn't.