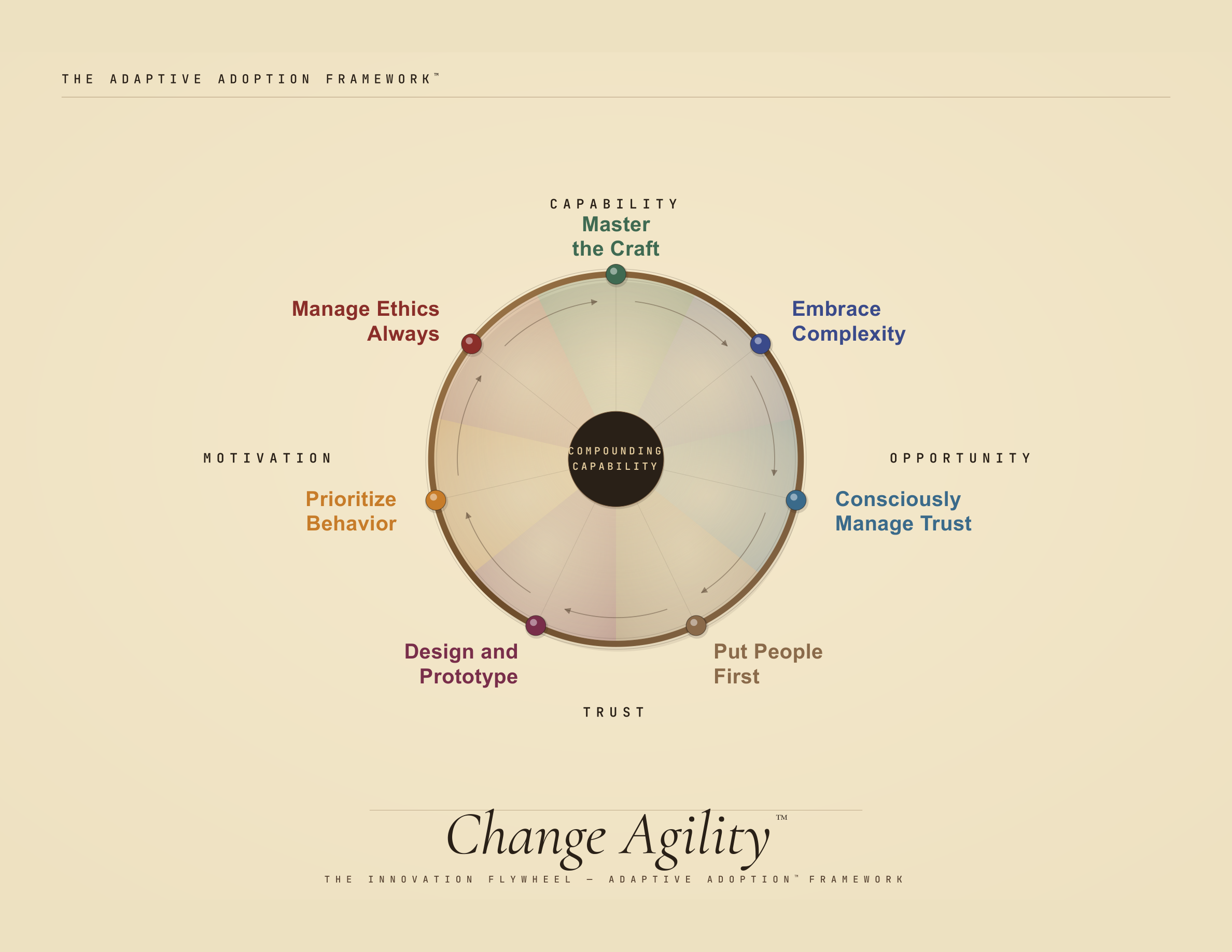

Change Agility™

The Specific Adoption Problems AI Creates

- Change is treated as a project, not a capability. Organizations run change as discrete initiatives with start dates and end dates. AI doesn't end. The organizations that win will be the ones that build permanent adaptive capacity — not the ones that execute the best rollout plan.

- People are treated as the last mile. Traditional change cut the check, bought the tech, and persuaded people to use it. With AI, the human is the integration layer, not the end-user.

- Traditional training never worked well. Some experts put "training transfer to the job" as low as ten percent. AI is more like carpentry than calculus — you have to build with it to learn it. And the half-life of AI knowledge is about three months.

- Complexity defeats planning. AI adoption is a complex adaptive system — three bodies in motion (humans, organizations, a non-human agent) producing emergent patterns no plan can predict.

- Trust is undiagnosed. Organizations know trust is low but treat it as a communications problem rather than diagnosing which of four trust dimensions has actually failed. Under-trust and over-trust operate simultaneously, requiring entirely different interventions.

- Prototyping is absent. Change management assumes you know the destination. AI moves too fast to define a future state; you must design and iterate your way toward it.

- Persuade then pray. Superb communication, education, and inspiration, then waiting for behavior to follow. It doesn't. In the lab of their own lives, people realize that good intentions matter less than behaviors, yet in business the intention-action gap is a chasm.

- Ethics is outsourced to compliance. A "short list" of AI ethical issues runs to forty items. No committee meets fast enough to adjudicate them in real time.

Why Change Agility Is Different

- Agility as infrastructure. Change Agility is not a program you run — it is a capability you build. Seven pillars, each with tools, processes, behaviors, and skills that compound over time. The flywheel, not the project plan.

- People-first sequencing. Invert the order: begin with tools and workflows that serve the human, that augment rather than automate. You get to the efficiency gains faster by not starting with them.

- Craft, not curriculum. AI is not a language you learn; it is a system you architect. The unit of learning is the community of practice — people learning together, in public, through doing.

- Complexity-native. Probe-sense-respond replaces plan-and-execute. Designed for emergence, not control.

- Trust as a named variable. Four trust dimensions (Relational, Institutional, Self-Trust, Task Trust), each with distinct failure modes and distinct interventions. Naming it does not fix it; calibration does.

- Design thinking for change. Build–Measure–Learn applied to organizational change itself. Every initiative is a prototype until evidence says otherwise.

- Behavior before attitude. Change the environment, change the behavior; the mindset catches up. Behavioral science provides tools for understanding, changing, and measuring behavior.

- Active ethics. Ethical business isn't about having better surveillance — it is about the whole workforce saying: I know we can, but should we?

- Integrated, not isolated. Change Agility works alongside Leadership Delta and Behavioral Governance, new approaches to leadership and governance designed for the AI era. The three frameworks are mutually reinforcing and designed to be deployed together.

When management ideas live in PDFs, they become rigid. The tools, diagnostics, and methods behind this framework are open and inspectable.

Below: the high-level elements of the framework, with a single example tool, process, behavior, and skill per pillar.

THE CHANGE AGILITY FRAMEWORK

Behavioral Science — Diagnostics and Drivers (adapted from COM-B)

Skills, tools, knowledge, and access. The most common misdiagnosis: assuming motivation is the problem when people literally don't know how.

Incentives, purpose, identity. Extrinsic rewards get compliance. Intrinsic motivation — autonomy, mastery, purpose — gets adoption.

The four-dimensional trust problem. Relational, Institutional, Self-Trust, and Task Trust each fail differently and require different fixes.

Friction, access, time, governance. The org that blocks AI tools behind a nine-month legal review has diagnosed its own failure before it begins.